The efficiency gains from automating junior work are real and measurable. The cost to future expertise is equally real, and almost never in the model.

Every senior engineer, every VP of R&D, every technical leader in your organization learned their craft the same way: by doing work that someone more experienced could have done faster.

They reviewed code that a principal engineer could have reviewed better. They debugged issues that a staff engineer would have spotted immediately. They sat in meetings where they understood maybe 60% of what was discussed, and slowly, over years, that percentage climbed.

This is the apprenticeship model. It's inefficient by design. And AI is about to dismantle it.

The Efficiency Trap

The logic seems sound: if AI can handle code reviews, documentation, testing, basic analysis, and first-pass problem-solving, why pay junior salaries for work that a model does faster and cheaper?

I see this calculation happening across R&D organizations right now. The 95% of hi-tech workers already using AI tools are increasingly handling tasks that used to belong to entry-level employees. The work gets done. The headcount stays flat. The CFO is pleased.

But here's what the efficiency calculation misses: those "junior tasks" weren't just production work. They were training data for humans.

When a junior engineer reviews code, they're not just catching bugs. They're learning to read other people's thinking. When they document a system, they're building mental models of architecture. When they debug an issue that takes them four hours instead of the senior's forty minutes, they're developing the pattern recognition that will make them valuable in five years.

Remove those tasks, and you remove the pathway.

The Pattern

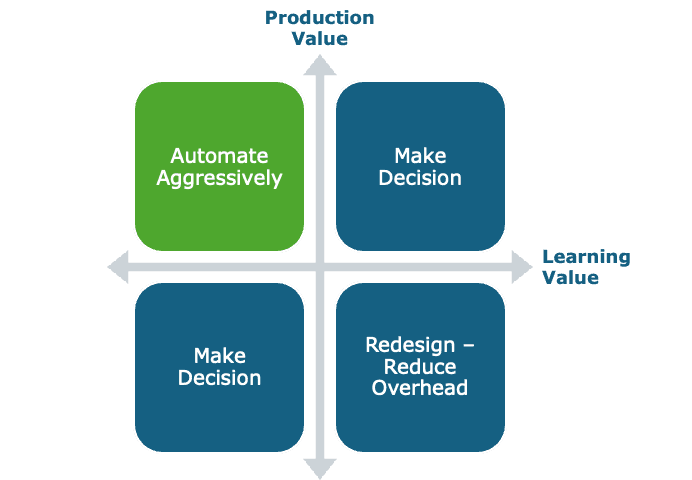

Across organizations implementing AI in their R&D operations, I see a consistent blind spot. Leaders evaluate tasks on a single axis: production value. Can AI do this faster? Cheaper? More consistently? If yes, automate.

What's missing is the second axis: learning value. Some tasks exist primarily to generate output. Others exist to generate capability. Many do both. The failure to distinguish between these categories creates a strategic problem that won't surface for three to five years, exactly when you need a new generation of senior talent and discover you never developed one.

What You Need To Do

For each category of work being considered for automation, you should assess:

Production value: How much does this task contribute to immediate deliverables? What's the cost of human execution versus AI execution? What's the quality differential?

Learning value: What skills or judgment does this task develop? At what career stage is this learning most critical? How long does it take for a human to progress beyond needing this task for development?

The output is a four-quadrant view:

High production, low learning? Automate aggressively. Low production, high learning? Redesign the task to maintain learning while reducing overhead. High on both? This is where the real strategic thinking happens.

For high-production, high-learning tasks, design AI augmentation that keeps humans in the loop at the learning-critical moments.

The AI might do the first pass, but the junior engineer reviews the AI's output, explains the reasoning, catches the edge cases the model missed. The task takes longer than full automation. It also produces engineers who can actually think.
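One way to make the two-axis assessment concrete is a small scoring sketch. Everything specific here is an illustrative assumption rather than a prescribed tool: the 0-to-1 scores, the 0.5 cutoff, the names, and the example tasks with their numbers. The text above gives no recommendation for the low-production, low-learning quadrant, so the sketch only flags it for review.

```python
from dataclasses import dataclass

# Assumed cutoff between "low" and "high" on each axis; adjust to your context.
THRESHOLD = 0.5


@dataclass(frozen=True)
class Task:
    name: str
    production_value: float  # 0..1: contribution to immediate deliverables
    learning_value: float    # 0..1: contribution to skill and judgment development


def recommend(task: Task, threshold: float = THRESHOLD) -> str:
    """Map a task onto the four-quadrant view and return a recommendation."""
    high_production = task.production_value >= threshold
    high_learning = task.learning_value >= threshold

    if high_production and high_learning:
        return "augment: keep humans in the loop at learning-critical moments"
    if high_production:
        return "automate aggressively"
    if high_learning:
        return "redesign: maintain learning while reducing overhead"
    # Assumption: this quadrant is not covered in the text, so defer to judgment.
    return "review case by case"


# Hypothetical tasks with made-up scores, for illustration only.
tasks = [
    Task("boilerplate generation", production_value=0.8, learning_value=0.2),
    Task("code review", production_value=0.7, learning_value=0.9),
    Task("system documentation", production_value=0.3, learning_value=0.8),
]

for task in tasks:
    print(f"{task.name}: {recommend(task)}")
```

The scores themselves are the hard part. They come from the production and learning questions above, not from the code, and the threshold is a judgment call each organization would have to make for itself.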

The Questions R&D Leaders Should Be Asking

When evaluating AI implementation for any task currently performed by junior team members, the strategic questions are:

Where did our current senior engineers learn to do what they do? Trace the actual pathway.

Which early-career tasks built which capabilities?

If we automate this task, what replaces it as a learning mechanism?

What percentage of our junior hires' work is primarily developmental versus primarily productive?

How long until this matters?

The Uncomfortable Math

The junior talent question is a second-order effect. It won't show up in your Q3 metrics. It will show up when you try to hire a senior engineer in 2030 and discover the market has far fewer candidates with deep, hard-won expertise.

The organizations getting this right aren't avoiding AI. They're implementing it with a dual-purpose lens, optimizing for both current output and future capability development. They're treating junior employee learning pathways as infrastructure, not overhead.

What This Means Practically

I'm not arguing against automating entry-level work. I'm arguing for doing it strategically, with explicit answers to the question: how do we develop the next generation of experts?

For some tasks, the answer is full automation with no replacement needed. For others, it's AI augmentation that preserves human learning moments. For a critical few, it might mean deliberately protecting certain tasks from automation, accepting inefficiency today to ensure capability tomorrow.

The worst outcome is automating by default, celebrating the efficiency gains, and discovering five years later that you've optimized yourself into a capability crisis.