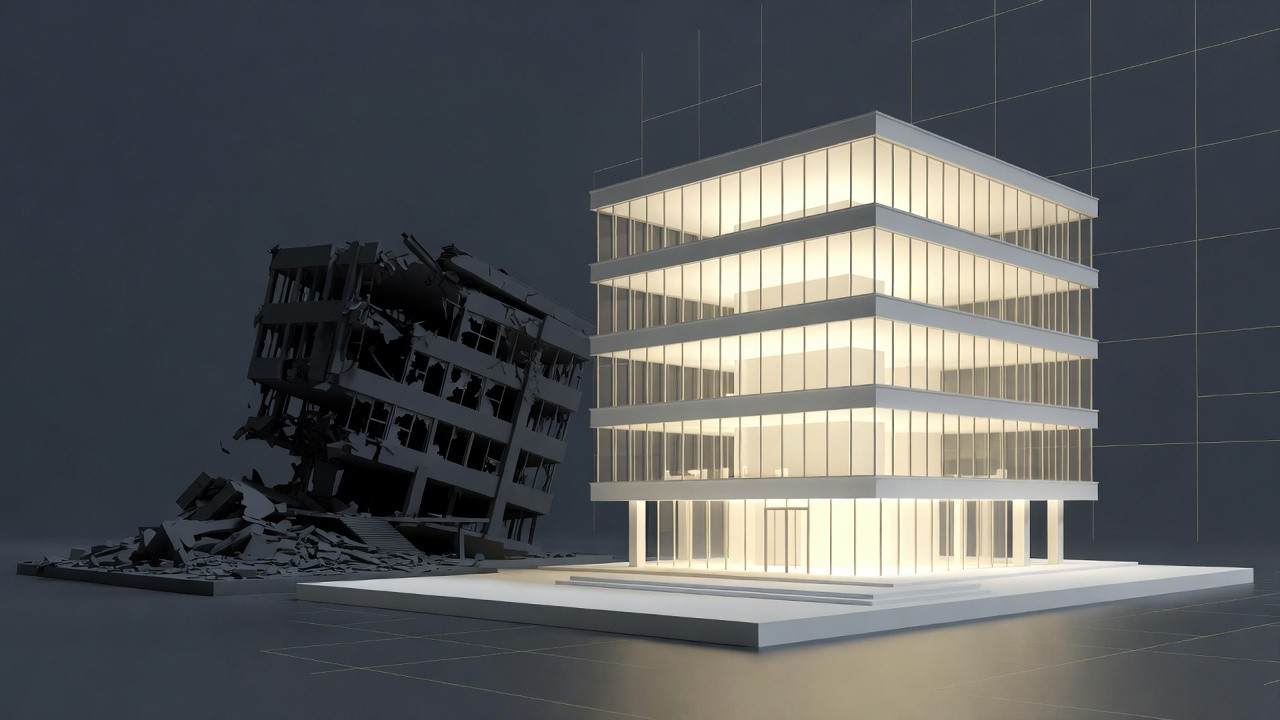

Every VP R&D has run at least one AI pilot that produced a great presentation and zero operational change. Many have run several. The decks look similar: loosely defined productivity metrics, a few impressive demos, a recommendation to "scale thoughtfully", and then a slow drift back to how things were.

This isn't a technology problem. It's a pilot execution problem. And it has a solution.

After running AI implementation programs across R&D organizations, I've identified what separates pilots that permanently change how teams work from pilots that fade after the kickoff enthusiasm dies.

The difference isn't the tool, the budget, or the team's technical sophistication. It's the structure of the pilot itself.

Start from the Decision, Not the Technology

Most pilots are designed forward: we have this tool, let's see what it can do. The pilots that work are designed backward:

What go/no-go decision should we be able to make when the pilot ends? What do we expect to happen, and how will we measure it?

This distinction sounds subtle. The operational implications are significant.

When you start from the decision, you're forced to answer three questions before day one:

Which single workflow are we targeting? Not "development productivity" in general, but specifically: PR review cycle time for Team X, or first-response time for Tier-1 support issues. The more specific the workflow, the cleaner the signal.

Who owns the decision? Someone needs to be named, before the pilot starts, as the person who will say yes or no by the end of the pilot. If this person isn't identified upfront, the pilot will end in consensus-seeking that produces no decision. I've seen pilots extend for six months because nobody had explicit authority to move forward or kill them.

What's your stop criterion? Write down the specific number that would cause you to stop: "If ticket cycle time doesn't decrease by at least 15% for the pilot team, we don't proceed." This does two things: it forces honest measurement from the start, and it creates organizational permission to stop. Without a measurable criterion, pilots become zombies, consuming attention and budget while producing nothing.
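To make the stop criterion concrete, here is a minimal Python sketch of recording the day-one answers and checking them on the last day. The `PilotCharter` fields, the median-based comparison, and the sample cycle times are hypothetical illustrations, not a prescribed format; only the 15% threshold comes from the example above.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class PilotCharter:
    """Hypothetical record of the answers fixed before day one."""
    workflow: str        # the single workflow being targeted
    decision_owner: str  # the named person who makes the go/no-go call
    metric: str          # what is measured, e.g. ticket cycle time in hours
    min_decrease: float  # stop criterion: required relative decrease (0.15 = 15%)


def relative_decrease(baseline: list[float], pilot: list[float]) -> float:
    """Relative drop in the median value; positive means the metric went down."""
    before = median(baseline)
    after = median(pilot)
    return (before - after) / before


def should_proceed(charter: PilotCharter, baseline: list[float], pilot: list[float]) -> bool:
    """True only if the observed decrease meets the criterion written down upfront."""
    return relative_decrease(baseline, pilot) >= charter.min_decrease


if __name__ == "__main__":
    charter = PilotCharter(
        workflow="Ticket cycle time for Team X",
        decision_owner="(named before the pilot starts)",
        metric="ticket cycle time (hours)",
        min_decrease=0.15,
    )
    baseline = [40.0, 52.0, 38.0, 61.0, 45.0]  # hypothetical pre-pilot cycle times
    during = [33.0, 41.0, 30.0, 50.0, 36.0]    # hypothetical cycle times during the pilot
    change = relative_decrease(baseline, during)
    decision = "proceed" if should_proceed(charter, baseline, during) else "stop"
    # prints: ticket cycle time (hours): 20% decrease -> proceed
    print(f"{charter.metric}: {change:.0%} decrease -> {decision}")
```

Comparing medians rather than means keeps one unusually slow ticket from deciding the outcome; that choice, like the sample data, is an assumption you would settle at the same time you write the threshold down.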

Manage Adoption Friction, Not Just Output

Here is where most pilots miss the most important signal they could collect.

A pilot will typically measure whether the AI tool produces good outputs. Did the code it generated work? Were the summaries accurate? Did the test coverage improve? These are necessary metrics. They're not sufficient ones.

What the pilot needs to also measure is adoption friction: how much resistance does the tool create in actual daily use? This is harder to quantify but more predictive of rollout success than output quality.

In practice, this means tracking things like: What percentage of the pilot team uses the tool consistently after the first week (not just the enthusiasts)? Where in the workflow do people revert to the old way? What complaints come up repeatedly? Which team leads are actively promoting it versus quietly ignoring it?
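The first of these signals can be computed from simple usage data. The Python sketch below assumes you can export which days each pilot member used the tool; the `usage` mapping, the five-active-day definition of "consistently", and the member names are all hypothetical. The denominator is the whole pilot team, including people who stopped or never started, so the enthusiasts can't carry the number on their own.

```python
from datetime import date, timedelta


def consistent_adoption_rate(
    usage: dict[str, set[date]],
    pilot_start: date,
    pilot_end: date,
    min_active_days: int = 5,
) -> float:
    """Share of the pilot team that used the tool on at least `min_active_days`
    distinct days after the first week (a hypothetical definition of 'consistently').
    `usage` must list every team member, with an empty set for non-users."""
    cutoff = pilot_start + timedelta(days=7)  # skip the first-week novelty period
    consistent = sum(
        1
        for days in usage.values()
        if len({d for d in days if cutoff <= d <= pilot_end}) >= min_active_days
    )
    return consistent / len(usage) if usage else 0.0


if __name__ == "__main__":
    start = date(2024, 3, 4)
    end = start + timedelta(days=29)  # a hypothetical 30-day pilot
    usage = {
        "alice": {start + timedelta(days=i) for i in range(0, 30, 2)},  # steady user
        "bob": {start + timedelta(days=i) for i in range(7)},           # first week only
        "carol": {start + timedelta(days=i) for i in (8, 15, 22)},      # occasional
        "dan": set(),                                                   # never used it
    }
    rate = consistent_adoption_rate(usage, start, end)
    # prints: Consistent adoption after week one: 25%
    print(f"Consistent adoption after week one: {rate:.0%}")
```

A number like this doesn't explain where people revert to the old way; it only tells you whether to go looking, which is what the complaint and team-lead signals are for.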

I've seen tools with excellent output quality fail at rollout because the adoption friction was invisible during the pilot. Senior engineers felt their expertise was being bypassed. Team leads weren't sure how to evaluate AI-assisted work. Security review processes weren't designed to handle the volume or format of AI-generated code.

These aren't technology problems. They're organizational problems, and the pilot is your only structured opportunity to see them before they become expensive at scale.

Build Capability Transfer Into the Pilot Design

The other thing missing from most pilots: a plan for what happens to the knowledge after it ends.

The typical pilot team is made up of volunteers, usually the engineers most interested in AI. They become highly competent with the tool over 30 days. Then the pilot ends, and the organization faces a rollout where most people are starting from zero. The learning doesn't transfer automatically.

Pilots that successfully scale build capability transfer into their design from day one. This means documenting what works as you go, not as a retrospective exercise. It means rotating people through the pilot team if possible. It means the pilot team's deliverable isn't just a go/no-go recommendation but a starter playbook for the teams that come next.

Capability transfer isn't an add-on. If you skip it, a successful pilot creates a bottleneck: you've proven the value, but you've concentrated the knowledge in five people who are now overwhelmed with requests to help everyone else.

The Last Day Deliverable

If you've done this right, the last day looks different from the typical pilot review.

Instead of a deck full of potential, you have a clean data set measured against predefined expectations. Either the metric moved enough to justify proceeding, or it didn't. Either adoption was strong enough to suggest broader rollout will work, or there are specific friction points that need to be addressed first.

The decision-maker you named on day one reviews the data against the criteria you defined on day one. They make a call. The organization moves.

This is what I mean when I say the methodology matters more than the tool. Every AI pilot has a tool at its center. The ones that change how your organization actually works have a decision architecture around that tool, built before the first line of code runs.

The technology is the easy part. Designing the experiment properly is where the real work is.

*NativeAI helps high-tech companies design AI implementation programs that produce operational change, not just evidence of potential. If you are planning an AI pilot and want it to actually move the needle, contact us.*